Unconscious bias training is a waste of time.

Human decision making is prone to unconscious bias, and many of us are aware of the pernicious effects of implicit bias in the workplace. However, this cannot be improved solely by companies throwing money at the problem with surface-level diversity & inclusion efforts. Bias can be removed from decision making, but as this article will explain, unconscious bias and diversity training isn’t the way to do this.

The rising popularity of implicit bias training

If you look at Google search trends for 'unconscious bias training', you can see that interest has spiked.

As the Black Lives Matter movement took off in its current form, there was a huge increase in searches, particularly in the United Kingdom, Australia, the United States and Canada.

It’s also been making headlines here in the UK.

In December 2020, the government released a statement announcing that “unconscious bias training would be phased out in [government] departments”.

To many people, this sounded like a significant step backwards and a general lack of interest in improving diversity...

The truth is: the evidence around the effectiveness of unconscious bias training seems to suggest that it doesn't actually work.

Before we dive into the evidence behind this claim, it’s worth briefly covering what unconscious bias (and training to prevent it) entails...

What is unconscious bias?

Anyone with some familiarity with diversity & inclusion is likely to have come across the term ‘unconscious bias’. The term refers to prejudices that we are often unaware of and that operate outside our direct control. These tend to stem from stereotypes formed by our backgrounds, cultures, and personal experiences.

It’s worth noting that unconscious bias is natural and very much part of our psychological makeup. But it can hinder our attempts to make fair, objective judgments.

Here are some of the most common types of unconscious bias:

Perception bias – This is when we believe something is typical of a particular group of people based on cultural stereotypes or assumptions. This leads us to make reductive and overly simplistic generalisations of diverse groups of people.

Affinity bias – When we feel as though we have a natural connection with people who are similar to us, this can lead to 'affinity bias', which overrides our objective perception of their skills, behaviours and character.

Halo effect – The term 'halo effect' describes how we project positive qualities onto people without actually knowing them. This can prevent us from making objective assessments of a person's values and abilities. In comparison, a negative first impression can lead us to continually associate people with negative traits, despite any evidence to the contrary. This is called the 'horn effect'.

Confirmation bias – As human beings, we like to believe that we are good judges of character, and often put a lot of trust in our own instincts. Therefore, we tend to try and confirm our own opinions and pre-existing ideas about a particular group of people. The term 'confirmation bias' describes how we overlook evidence that contradicts these pre-existing ideas.

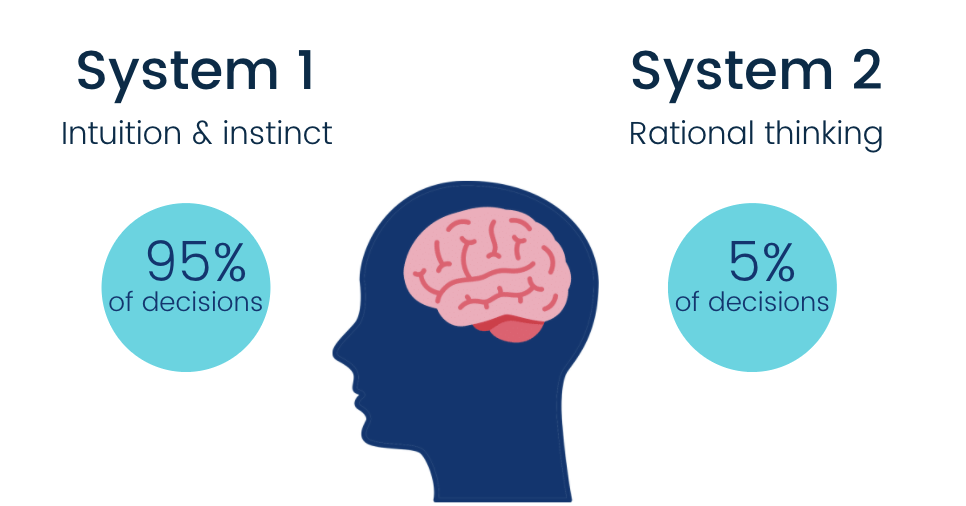

In ‘Thinking, Fast and Slow’, Daniel Kahneman explains how our brains work using two systems.

System one describes how we make mental shortcuts as part of fast, intuitive and emotionally-driven decision making. This is crucial to our ability to operate and actually function in the world. In comparison, system two is the slower, conscious and effortful thinking we do when making big, infrequent decisions.

Unconscious bias occurs when we should be using system two but instead fall back on using system one. We rely on mental shortcuts and quick-fire associations to make decisions that should be more conscious and methodical. This leads to the assumptions and generalisations that are often at the root of biased behaviour.

Interested in unpacking this a little further? Take a look at our comprehensive guide to unconscious bias before finding out more about how Applied's recruitment platform eliminates unconscious bias for fairer and more inclusive hiring decisions.

What is unconscious bias training?

Unconscious bias training (also known as implicit bias training) seeks to raise awareness around the mental and cognitive shortcuts that cause us to quickly make (often misguided) assumptions about a person’s ability or character. It usually involves a series of exercises and assessments designed to identify examples of biased thinking. The Holy Grail of these tests is the Implicit Association Test (IAT), popularised through Harvard University’s Project Implicit.

Bias training dates back to the 1960s, a period defined by social movements where many of our current anti-discrimination laws came to pass. Now, you would think that it was civil rights activists who called for the introduction of bias training.

However, the unfortunate reality, as pointed out by Tidal Equality, was that it was actually a corporate response to anti-discrimination law, helping companies (who previously had free rein to discriminate) avoid legal action. This is ironic to say the least, given the role of bias training in today's diversity & inclusion efforts.

Here’s an official definition from the UK’s Equality and Human Rights Commission:

“UBT are sessions, programmes or interventions that aim at raising awareness and/or teaching methods to alleviate unconscious (implicit) biases. These biases are views or opinions toward other people that we are unaware of and that are automatically activated and frequently operate outside our conscious awareness.”

So if you think back to the examples of unconscious bias we just looked at, the training only really aims to deal with one specific type: stereotype bias, meaning the stereotypes and assumptions we have about particular groups.

There are 200 or so cognitive biases that can interfere with recruitment processes and people's decisions, but unconscious bias training doesn’t tackle most of them.

For example, most training doesn't cover subjects such as the 'serial position effect' (we tend to remember the first and last people we interview, but not the people in the middle) or recency bias.

What does unconscious bias training look like?

So, let’s take a look at what bias training actually entails…

The standard technique is to start with an unconscious bias test, which claims to tell you how much implicit bias you have: how many of these stereotypes or hidden assumptions sit in your psyche. Harvard’s race Implicit Association Test, for example, will ask you to look at white faces and black faces. You’ll then have to match the faces with various positive and negative concepts, and the speed of your responses is used to estimate how much implicit bias you have.
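The scoring step is easy to gloss over, so here is a minimal Python sketch of the general idea, assuming response times have already been recorded for two pairing blocks. It follows a simplified form of the IAT's 'D score' (the difference in average response time between the two blocks, divided by the standard deviation of all responses) and deliberately leaves out the trial exclusions and error penalties of the published scoring procedure. The function name and example latencies are hypothetical.

```python
from statistics import mean, stdev


def simplified_d_score(compatible_ms, incompatible_ms):
    """Simplified IAT-style D score (hypothetical helper, not Project Implicit's code).

    compatible_ms: response times (ms) when the pairing matches the stereotype
    incompatible_ms: response times (ms) when the pairing runs against it
    A positive score means slower responses on the counter-stereotypical pairing.
    """
    if len(compatible_ms) < 2 or len(incompatible_ms) < 2:
        raise ValueError("need at least two trials in each block")
    # Pooled standard deviation across every trial from both blocks
    pooled_sd = stdev(list(compatible_ms) + list(incompatible_ms))
    if pooled_sd == 0:
        raise ValueError("response times show no variation")
    # Mean latency difference, scaled by the pooled standard deviation
    return (mean(incompatible_ms) - mean(compatible_ms)) / pooled_sd


# Hypothetical latencies: this participant is about 200 ms slower on the second pairing
print(round(simplified_d_score([600, 650, 700], [800, 850, 900]), 2))  # prints 1.69
```

A score near zero means the two pairings took roughly equally long; the larger the score, the stronger the measured association.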

The rest of the training tends to look like this...

- Unconscious bias 'test' (e.g. Implicit Association Test)

- Unconscious bias 'test' debrief - explaining to participants what the results mean

- Education on unconscious bias theory

- Information on the impact of unconscious bias

- Suggested techniques for either reducing the level of unconscious bias or mitigating its impact

How much does diversity & inclusion training cost?

The diversity & inclusion training market was recently valued at nearly $8 billion annually.

Google alone spent $114 million on diversity-related programs in 2014.

According to Time Magazine, almost 20% of companies in the United States offer training to tackle unconscious biases in the workplace.

A 2017 survey found that 35% of hiring decision-makers in the UK intended to increase their investment in diversity initiatives.

It’s an undeniably lucrative market...

But the question remains whether diversity training actually provides effective solutions to workplace bias.

On the surface, the C-Suite will eagerly buy into unconscious bias training, not least because a diverse and inclusive workforce can boost the bottom line.

However, when we look at ROI in terms of actual behaviour change, the results are mixed at best.

Evaluating unconscious bias training exercises

Before looking at what the research has to say, let’s remind ourselves of what unconscious bias training actually claims to do.

The first is raising awareness - just making people aware that they have implicit biases and that discrimination is a problem in the workplace.

Then there's reducing implicit biases and addressing explicit biases:

Implicit biases are the stereotypes and assumptions we don’t even realise we have.

Explicit biases are the very much intentional and open prejudices people may have.

The last claim that bias training makes is around changing behaviour.

Raising awareness

In terms of raising awareness, there’s evidence that suggests bias training does successfully shine a light on the issue of bias and the outcomes it leads to.

And whilst most academic literature tells us that awareness alone doesn't lead to behaviour change, it is a necessary step in the process of change.

Here are a few of the key studies around bias training's impact on awareness:

Hausmann et al. (2014): showed self-reporting increases awareness of unconscious bias.

Carnes et al. (2015): found that bias training not only raised awareness but also motivation to remedy the problem.

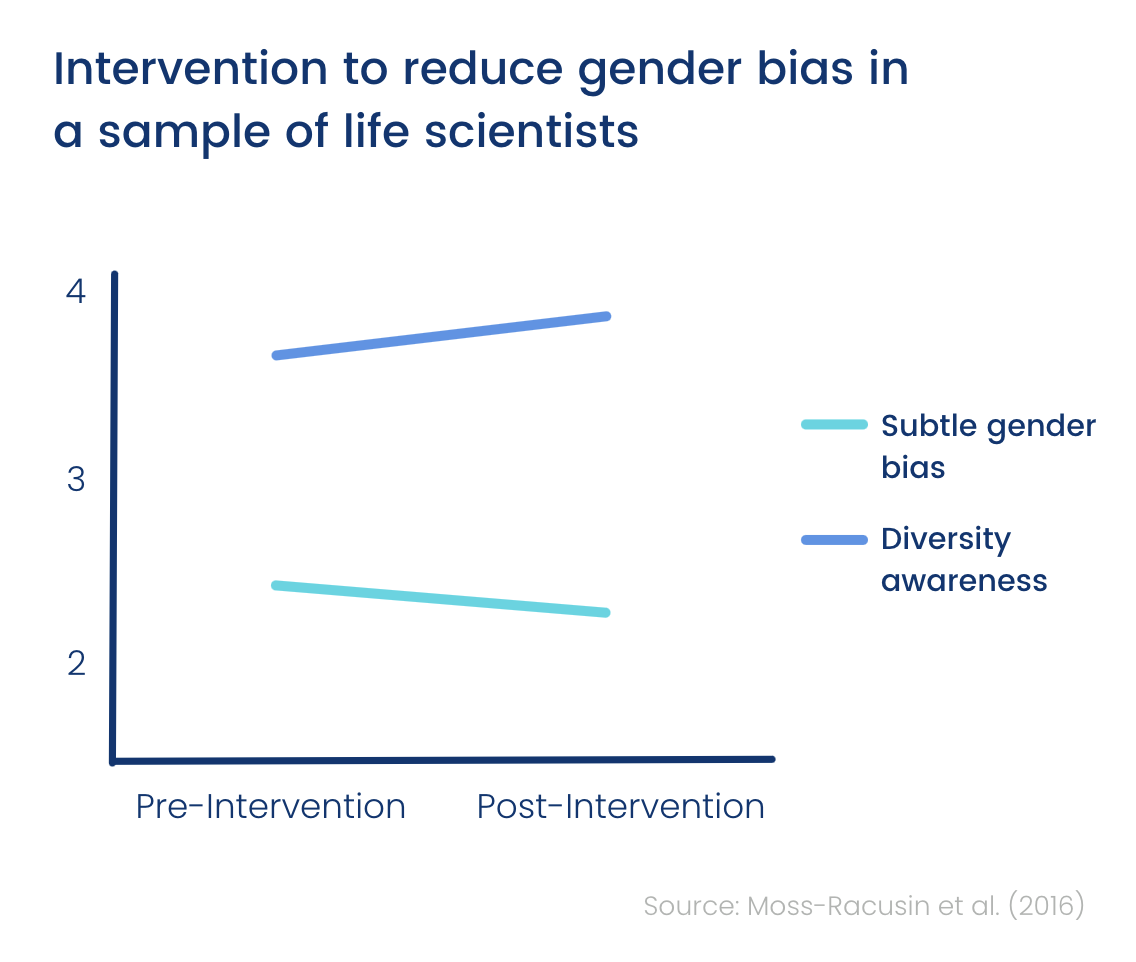

Moss-Racusin et al. (2016): found that bias training heightens the ability to detect the gender diversity of an environment. Those involved in the training were then more sensitive to the diversity balance of their team and the wider organization.

Conclusion: unconscious bias training is fairly effective at raising awareness around diversity issues

Reducing implicit bias

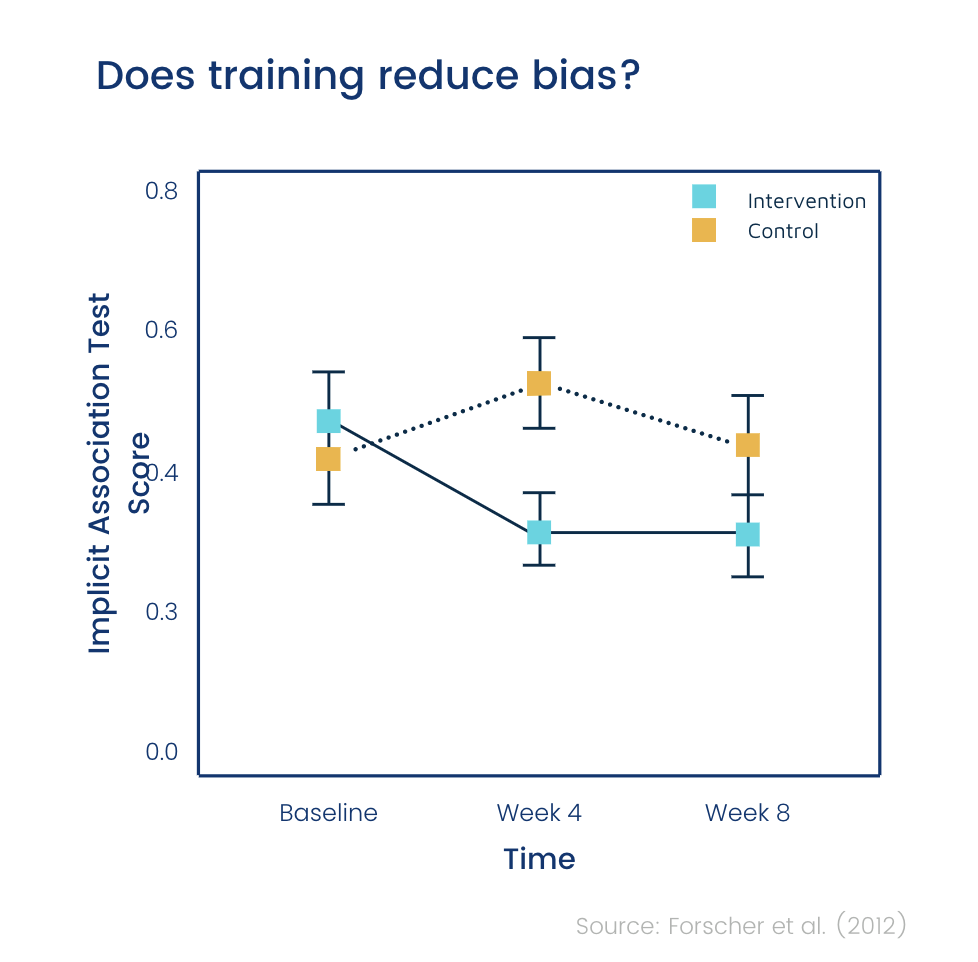

When it comes to reducing implicit bias, the evidence is mixed.

The most positive evidence comes from a 2012 study.

Researchers found that there was some reduction in racial preference after eight weeks, according to an implicit bias test with 91 participants.

However, a meta-analysis of 426 studies (involving more than 80,000 participants) found that although there was a reduction in bias immediately after training (albeit a very slight reduction), this disappeared over time.

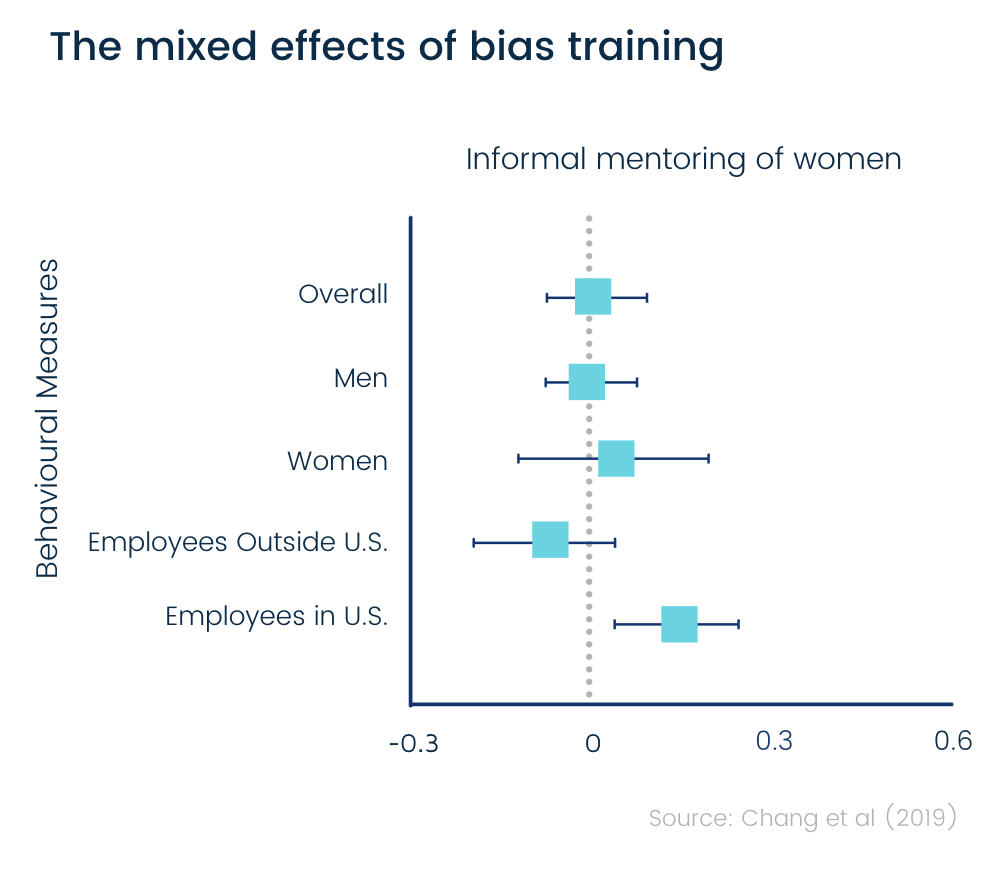

In another study, over 10,000 employees from a large multinational company were invited to participate in “inclusive leadership workplace training.” The 3,000 who responded were assigned to one of two training groups or a control group. The training was a 68-minute online course combining gender bias training with general bias training.

Researchers followed up the training exercises in two ways. First, they sent out a survey to gauge ‘attitudes’ towards diversity. They then began measuring responses to a series of unconnected workplace initiatives. These included nominating a fellow employee to be recognised for their performance, volunteering to mentor other employees, and offering guidance to new hires.

The study found a measurable shift in both attitudes and behaviours amongst women and ethnic minorities.

However, there was only a marginal change among white men.

It seems that people for whom diversity was a personal issue felt more compelled to act on what they had learned.

The results seem to justify any scepticism about unconscious bias training effectiveness.

Later in this article, we will show you how to reduce the impact of implicit bias by making structural changes to your hiring process, rather than trying to make changes to people's psychology.

Conclusion: it seems that training can reduce implicit bias in some people for a period of time (up to 8 weeks), but the effects are temporary, and implicit bias is never reduced to zero.

Reducing explicit bias

So how about the stereotypes that people hold consciously and actively?

The most promising evidence for training's effect on explicit bias comes in the form of a 2014 study, measuring bias towards women in STEM. The training was found to have a positive effect on men’s personal implicit associations toward women in STEM. However, endorsement of explicit gender stereotypes was not reduced after a UBT intervention for men who held these explicit attitudes pre-training.

Other studies of training's effect on explicit bias are a lot more damning:

In one study, white subjects read a brochure critiquing prejudice towards black people. When they felt pressured to agree, the reading actually strengthened bias against black people.

And a third study even reported animosity, anger and resistance towards mandatory training.

Conclusion: when it comes to explicit bias, it's pretty safe to say that unconscious bias training doesn't work - and can even make things worse.

Changing behaviour

And now for the most crucial part - does unconscious bias training change behaviour?

Does it have any tangible, real-life impact?

Despite having been around since the ’60s, bias training’s effect on observed behaviour just hasn’t been measured enough.

There has been some research on its effectiveness in terms of behaviour change, so let’s dig into what we have…

A study of 829 companies over 31 years showed that bias training had no positive effects in the average workplace.

This study looked specifically at the progression of women, progression of minorities and the percentage of women and minorities in managerial positions.

It’s not looking directly at how behaviours changed as the result of the unconscious bias training... because no one's been measuring that.

However, what has been shown is that despite having extensive unconscious bias training, nothing substantial has happened in terms of diversity & inclusion.

What the evidence does suggest is that simply making people aware of their biases doesn't actually change their behaviour.

If we look at one of the biggest studies on behaviour change (the chart above), you can see that the companies focused on diversity training actually saw less diversity in terms of black women in management positions.

Perhaps the most rigorous study of unconscious bias training delivered an hour-long diversity course to thousands of employees at an international professional services company.

Following the session, participants reported improved acknowledgement of their own biases and greater support of women in the workplace.

However, this didn’t translate into lasting behavioural change.

Three weeks after taking the course, those who had taken the diversity course were no more likely to take on a female mentee.

Six weeks after taking the course, participants were also found to be no more likely to nominate a female colleague for recognition of their “excellence”.

Conclusion: there's not much evidence that unconscious bias training works, and there is evidence that it has little to no effect on outcomes.

Unconscious bias training could even backfire

Bias training can actually backfire in lots of ways.

When we looked at explicit bias, we saw how people can react badly and become more resentful when confronted with their biases, but there are more subtle effects at play too…

Moral licensing is when we believe we can balance out our less moral actions because we have been good in the past.

People who've been given vitamins are more likely to smoke and skip exercise that evening - because you've done a good thing (taken vitamins), you feel like you earned your bad thing (smoking).

A study found that when given the opportunity to endorse Barack Obama (back in 2008), people were then more likely to discriminate against African Americans.

And when subjects are told that their employers have pro-diversity measures, such as training, they presume that the workplace is free of bias and react harshly against claims of discrimination.

Training seems to result in unrealistic confidence in anti-discrimination programs, making employees complacent about their own biases.

So when it comes to bias training, there’s a strong possibility that participants will feel that they’re actually less susceptible to bias, when the idea of the training is to show people that they are in fact prone to it.

Given that people generally tend to rate themselves as less biased than others (a concept known as the bias blind spot), training could cause people to make more decisions that are contrary to the practices it advises.

How to measure the effectiveness of unconscious bias training

Since bias training’s impact on behaviour hasn’t been properly measured up until now, how can organizations follow up to ensure that what has been taught is being implemented?

How can we bridge the gap between awareness and actual behaviour?

Well, for starters, employees would have to flag up every time they have a thought that could be biased. Then they’d have to make sure they replace it with a more realistic, well-rounded view.

Firstly, in a busy workplace setting, this doesn’t sound particularly practical. Secondly, for this to work in the first place the employee would have to be conscious of their unconscious bias.

Furthermore, if we propagate the idea that unconscious bias training is a magic bullet solution, hiring managers and recruiters could feel as though they don't have to second-guess their decisions.

There is even evidence to suggest that encouraging awareness of unconscious bias could hinder diversity initiatives. A study carried out in 2000 sought to measure ‘effects of thought suppression on evaluations of older job applicants.’

Participants first watched a series of videos about diversity. They were then asked to evaluate a series of job applicants. Those who were instructed to ‘suppress demography-related thoughts’ surprisingly rated older applicants less positively than their younger counterparts. If you’re interested, we recently published a blog post examining ageism and recruitment.

The study, published in the Journal of Organizational Behavior, suggests that ‘instructions to suppress stereotypic thoughts may have detrimental effects [...] if raters are cognitively busy when they implement these instructions.’ The authors referred to this effect as an ‘ironic evaluation process.’

Does implicit bias training work? Putting it all together

Key takeaways from the research around bias training:

- There is no rigorous evidence that unconscious bias training can lead to a lasting, significant shift in behaviour.

- The maximum impact of bias training is a relatively small reduction in implicit biases. However, this may last as little as a few days, and eight weeks at most.

- There is evidence that the training can be counterproductive and detrimental to morale.

So, does implicit bias training work? The short answer seems to be no.

However, these findings don’t necessarily mean that bias training is useless and should be completely disregarded.

What they do tell us is what is actually possible to achieve through training alone.

If you’re thinking about using it as your primary strategy, you might want to consider the expense versus the potential results.

Frank Dobbin - a critic of diversity training in general - pointed out that for Starbucks to actually get everyone trained, they’d need to close 1000 stores for half a day in order to train 175,000 workers (at an estimated cost of $12,000,000).

Starbucks hires 100,000 new workers each year, so they’d need to do a dozen half-day sessions every year.

Starbucks would be reducing implicit bias at a cost of $72 million a year.

And this is how corporations manage to spend $8 billion a year on diversity training!

It's not that bias training isn’t useful whatsoever, it's just not a cost-effective or easily measurable means of reducing bias.

There are things you can do significantly cheaper that will have a far greater impact, like investing in a recruitment platform that eliminates unconscious bias from the very start of the hiring process.

What is wrong with unconscious bias training? Bias can only be removed by design

We can all agree that workplace discrimination exists. However, no-one wants to admit that they have prejudices.

Simply being aware of unconscious bias can only be so effective. While training may be effective at raising awareness around discrimination, it doesn't work when it comes to actually fixing the issue itself.

People may be aware that racism exists. However, this doesn’t help them unlearn societal preconceptions about race.

So, if unconscious bias training has so many flaws, what alternative is there to create a more diverse workforce?

Talking about the problem and throwing stats around doesn’t bring about real change.

Even well-intentioned diversity & inclusion professionals are naive in thinking they can change companies by preaching - without changing the system itself.

Instead of simply telling people about their biases and hoping that they actively re-train what is essentially their own nature, why not just design systems that make bias impossible?

That’s where behavioural science comes in.

In short, behavioural science studies human activities to find patterns in the way we behave. When it comes to bias, a behavioural science approach would accept that simply being told not to be biased doesn’t have an effect on how we act, and would instead seek to find a solution that actually changes habits.

Change environments, not people

If you look at examples of how bias has been reduced successfully, it’s not by trying to change people.

It's by changing environments.

If debiasing someone is in fact possible via training alone, it would take consistent and long-term effort, perhaps years!

What behavioural scientists do instead is create an environment which makes the right choice easy through ‘choice architecture’ - the way in which choices are presented to us.

The most famous example of choice architecture - and perhaps the most relevant to unconscious bias training - involves an orchestra. Back in the 70s, researchers managed to double the number of women getting through auditions by introducing blind auditions.

Since debiasing the reviewers would take years of training, researchers changed the audition process itself. So, when it comes to removing bias, it's much better to rearrange the environment in which people make choices, rather than attempting to change human nature.

Applied: A more effective way of removing bias from hiring...

Here at Applied, we’ve built an anonymous recruitment platform that makes it nearly impossible for bias to affect decision making.

Rather than just tell hirers to try and be completely objective, why not anonymise applications altogether so that they have no choice but to be objective?

By removing unconscious bias from your hiring process, diversity will naturally improve - no training needed!

Bias starts at the screening stage

The typical screening process is rife with unconscious bias.

The more we know about a candidate, the more grounds for bias there is.

According to a comprehensive study of hiring outcomes here in the UK, candidates from minority ethnic backgrounds have to send 80% more applications to get the same number of callbacks as a White-British person.
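To put the 80% figure in per-application terms, here is a short worked calculation. It is our own arithmetic on the headline number, not a figure reported by the study, with $r_W$ and $r_M$ standing for callbacks per application for White-British and minority ethnic applicants, and $c$ for the number of callbacks sought:

```latex
% Needing 80% more applications for the same c callbacks:
\frac{c}{r_M} = 1.8 \cdot \frac{c}{r_W}
\quad\Longrightarrow\quad
r_M = \frac{r_W}{1.8} \approx 0.56\, r_W
```

In other words, each application yields roughly 44% fewer callbacks. If 10 applications produce one callback for a White-British applicant, a candidate from a minority ethnic background would need around 18.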

This bias doesn’t just apply to ethnicity - your gender, social class, age etc can all affect your chances of being hired or promoted (you can skim our Recruitment Bias Report for more studies like the one above).

The problem with unconscious bias training is that it looks to debias people… but why not just remove the information that triggers bias in the first place?
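As a minimal sketch of that idea in Python, assuming applications arrive as dicts, the identifying fields can be stripped before anyone reviews them. The field names and the `anonymise` function are hypothetical and only illustrate the principle; they are not how the Applied platform is built.

```python
import uuid

# Hypothetical field names: a real applicant tracking system will use its own schema
IDENTIFYING_FIELDS = {"name", "email", "phone", "photo_url", "date_of_birth", "address"}


def anonymise(application):
    """Return a copy of an application with identifying fields stripped.

    An opaque candidate ID replaces the identity so reviewers can still refer
    to the answers. The original dict is left untouched, so the caller can keep
    a mapping from ID back to identity, out of reviewers' sight.
    """
    redacted = {k: v for k, v in application.items() if k not in IDENTIFYING_FIELDS}
    redacted["candidate_id"] = uuid.uuid4().hex[:8]
    return redacted


application = {
    "name": "Jane Doe",
    "email": "jane@example.com",
    "answers": {"Q1": "I would start by segmenting accounts..."},
}
print(anonymise(application))
```

This only removes explicit identifiers; free-text answers and CV details can still leak information about a candidate's background.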

Anonymization is a solid first step towards de-biasing hiring, but this alone probably isn’t going to drastically improve diversity if you’re still using CVs.

Why? Because even once someone’s identity is hidden, the rest of the information on their CV still paints a vivid picture of their background (but not their ability).

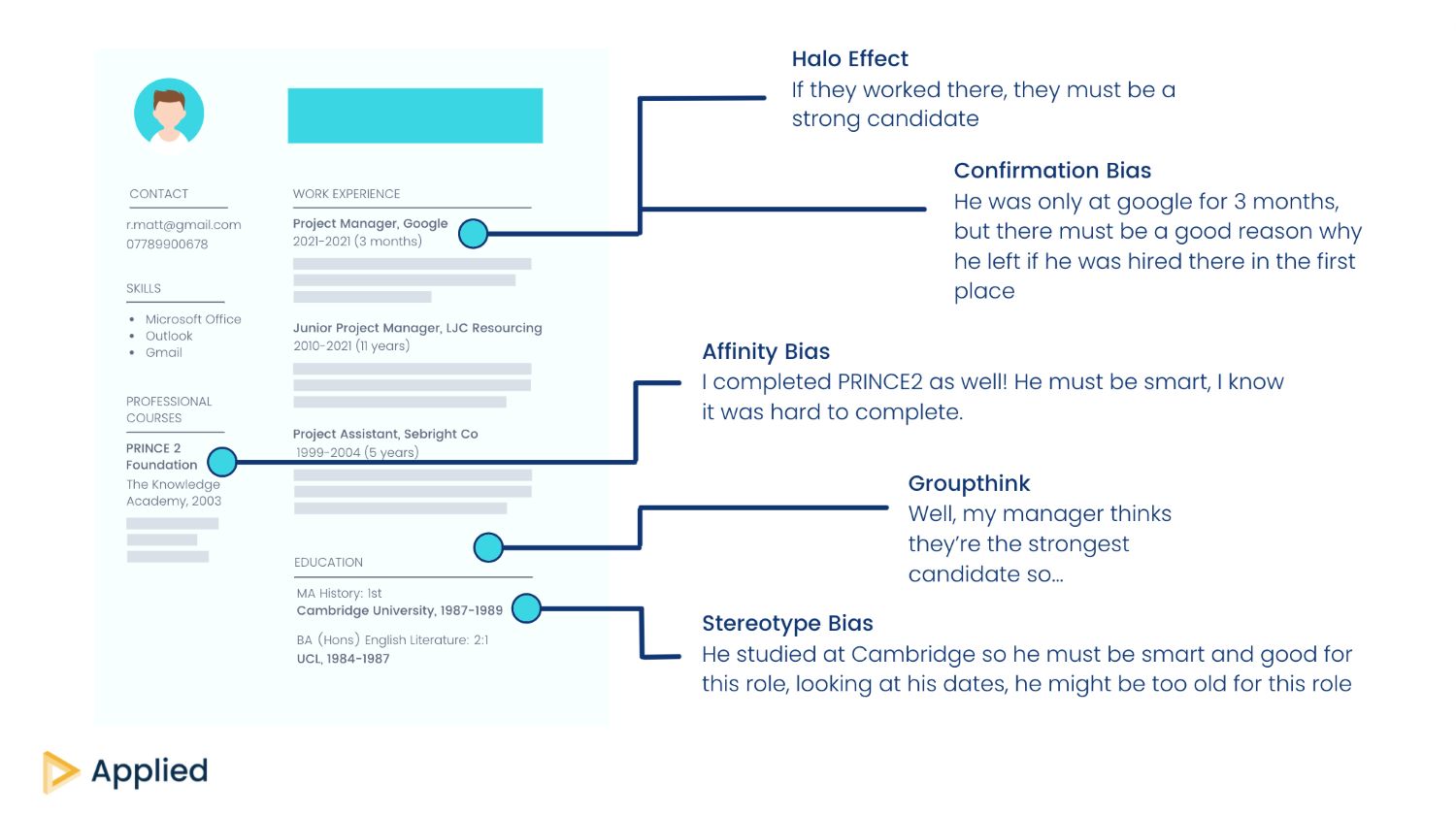

Above, you can see just some of the biases triggered by a CV.

Whilst removing obvious identifying information may reduce some biases, we know that those from minority backgrounds have a harder time in the hiring process and may therefore be less likely to have experience at well-known organizations.

By over-indexing on education and experience, you’re likely to find the same diversity gaps being perpetuated, even with an anonymous process.

Using work samples instead of CVs

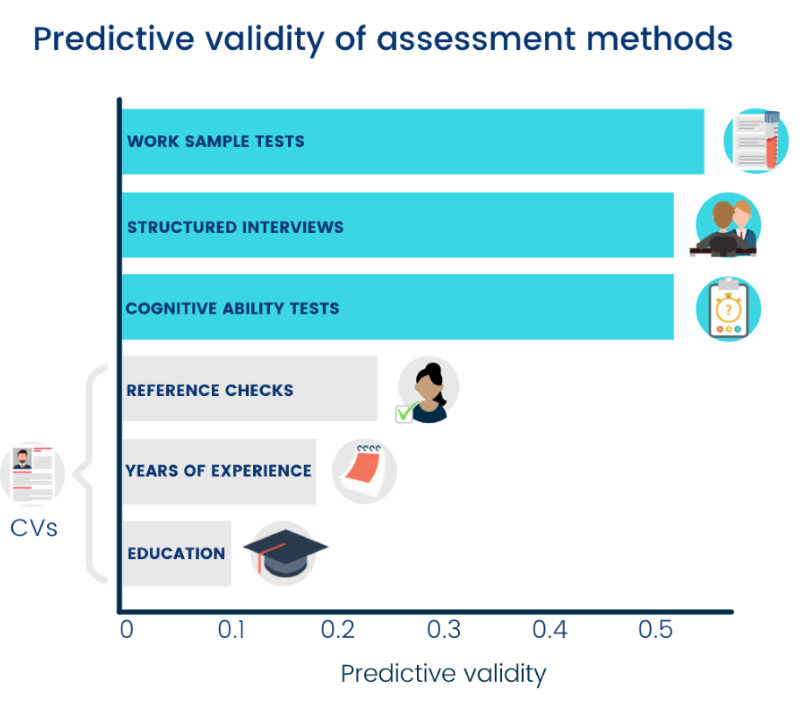

So if we’re ditching CVs, what should we be using instead? Well, take a look at the results of the meta-analysis above, testing the predictive validity of assessment methods...

As you can see, the most predictive assessments are known as ‘work sample tests.’

Spoiler alert: this is what we use at Applied and we’ve found that 60% of people hired through this process would’ve been missed via a typical CV screening.

Work samples are similar to situational-style interview questions, except they pose scenarios hypothetically.

A CV looks at someone’s background, and hirers attempt to guess whether or not this background would foster the necessary skills.

Work samples, on the other hand, test for skills directly.

Sure, decades of experience might mean that a candidate gives the best answers, but this isn’t always the case. We’d rather give candidates a chance to showcase their skills than discount them based purely on their background.

The idea of work samples is to simulate the job. You’re essentially asking candidates to either perform or explain their approach to a task that they would likely have to carry out should they get the job.

What could be more predictive than getting candidates to think as if they were already in the role?

Here’s a real-life example we used for an Account Manager role:

You've been given 50 accounts to manage, ranging from newly onboarded users to long term customers of Applied. They vary in terms of size, industry and knowledge of the Applied platform.

Your manager asks you to come up with a plan for how you will focus your time to maximise the growth of your accounts over the next 6 months.

What things would you consider in putting this plan together? How would you measure your success? Is there any other information you would need?

Skills tested: Accountability, Prioritization, Data-driven

As you can see, this work sample question closely reflects what the job would actually entail. Whilst those with experience may be best equipped to answer it, it doesn’t require a specific background: if you have the right transferable skills, you can give a great answer.

To create your own work samples, follow these steps:

- Decide on the 6-8 core skills required for the role.

- Think of a realistic scenario or task that would test at least one of these skills.

- Pose the scenario hypothetically, asking candidates what they would do.
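One way to keep the second step honest is to check that every core skill is tested by at least one question. Here is a minimal Python sketch, assuming each question is tagged with the skills it tests; the data layout, the `uncovered_skills` function and three of the skill names are hypothetical.

```python
def uncovered_skills(core_skills, questions):
    """Return the core skills that no work sample question tests yet.

    core_skills: skill names for the role (the steps above suggest 6-8).
    questions: dicts whose "skills" list names what each question tests.
    """
    if not 6 <= len(core_skills) <= 8:
        raise ValueError("expected 6-8 core skills for the role")
    tested = {skill for q in questions for skill in q["skills"]}
    # Keep the original order so gaps are reported predictably
    return [skill for skill in core_skills if skill not in tested]


# First three skills come from the Account Manager example; the rest are hypothetical
skills = ["Accountability", "Prioritization", "Data-driven",
          "Communication", "Relationship building", "Commercial awareness"]
questions = [{"title": "Account growth plan",
              "skills": ["Accountability", "Prioritization", "Data-driven"]}]
print(uncovered_skills(skills, questions))
# prints ['Communication', 'Relationship building', 'Commercial awareness']
```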

You can see more examples via our Work Sample Cheatsheet.

Once submitted, we put the work sample answers through the process below to eliminate lesser-known biases like ordering effects.

Chunking: Each application is sliced up by question. Instead of looking at all of Candidate 1’s answers, then Candidate 2’s, you’d look at every candidate’s answer to Question 1, then Question 2, etc. (this is to avoid the halo effect).

Randomization: Now that you’re reviewing question by question, we’d recommend randomising the order in which answers are viewed for each question, since we know answers viewed first tend to be favoured (a primacy effect).
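The chunking and randomization steps can be sketched in a few lines of Python. This is a minimal illustration assuming anonymised applications are stored as dicts of answers keyed by question; the data layout and the `build_review_queues` function are hypothetical, not how the Applied platform is built.

```python
import random
from collections import defaultdict


def build_review_queues(applications, seed=None):
    """Chunk anonymised applications by question, then shuffle each queue.

    applications: list of dicts shaped like
        {"candidate_id": "a1", "answers": {"Q1": "...", "Q2": "..."}}
    Returns {question: [(candidate_id, answer), ...]}, where each question's
    queue is shuffled independently so the same candidate is not
    systematically seen first.
    """
    rng = random.Random(seed)
    queues = defaultdict(list)
    # Chunking: regroup answers by question rather than by candidate
    for app in applications:
        for question, answer in app["answers"].items():
            queues[question].append((app["candidate_id"], answer))
    # Randomization: a separate shuffle per question
    for queue in queues.values():
        rng.shuffle(queue)
    return dict(queues)


apps = [
    {"candidate_id": "a1", "answers": {"Q1": "...", "Q2": "..."}},
    {"candidate_id": "b2", "answers": {"Q1": "...", "Q2": "..."}},
    {"candidate_id": "c3", "answers": {"Q1": "...", "Q2": "..."}},
]
for question, queue in build_review_queues(apps).items():
    print(question, [candidate for candidate, _ in queue])
```

Passing a different seed for each reviewer (or none at all) gives every reviewer their own order, which spreads any remaining ordering effects across candidates instead of concentrating them.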

Scoring criteria are essential

When it comes to reviewing work samples, you’ll need a set of criteria for each question.

This doesn’t need to be anything too detailed: we’d recommend a simple 1-5 star scale, with a few bullet points describing what a good, mediocre and bad answer would include.

Here are the criteria we used for the work sample above:

1 star

- No reference to different ways to segment customers

- Not clear what success measures would be

3 star

- Reasonable suggestions for segmenting customers but too focused on one dimension (e.g. MRR, without a different strategy for brand-new vs tenured customers)

- Success metrics focused only on increased revenue and not thinking about churn or customer satisfaction

5 star

- Understands there are multiple ways to segment a book of business and acknowledges that current value may not match future potential

- Suggests success metrics with multiple dimensions e.g. revenue growth, limited churn and customer satisfaction

Criteria ensure that candidates are being scored against the actual skills required for the role, rather than the reviewer’s own biases.

Criteria also mean that you can get other team members involved in the hiring process.

To average out the biases of individual hirers, have three team members review candidates’ work sample answers.

Not only is this an effective means of removing bias, but it’ll also give you more accurate scores due to a phenomenon known as ‘crowd wisdom’ - the general rule that collective judgment is more accurate than that of an individual.
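To show how criteria-based scores from three reviewers might be combined, here is a minimal Python sketch. It assumes each reviewer awards 1-5 stars per answer against the criteria, and that a candidate's overall score is the sum of their per-question averages; both the aggregation rule and the `aggregate_scores` function are assumptions for illustration rather than a description of Applied's scoring.

```python
from collections import defaultdict
from statistics import mean


def aggregate_scores(reviews, reviewers_per_answer=3):
    """Average star ratings per answer across reviewers, then sum per candidate.

    reviews: iterable of (reviewer, candidate_id, question, stars) tuples,
    where stars is an integer from 1 to 5 awarded against the question's criteria.
    """
    per_answer = defaultdict(list)
    for reviewer, candidate_id, question, stars in reviews:
        if stars not in range(1, 6):
            raise ValueError(f"{reviewer} gave {stars} stars; expected 1-5")
        per_answer[(candidate_id, question)].append(stars)

    totals = defaultdict(float)
    for (candidate_id, question), stars_list in per_answer.items():
        if len(stars_list) != reviewers_per_answer:
            raise ValueError(f"{candidate_id}/{question} has {len(stars_list)} reviews")
        # Averaging independent reviews dampens any one reviewer's leniency or bias
        totals[candidate_id] += mean(stars_list)
    return dict(totals)


reviews = [
    ("reviewer_1", "a1", "Q1", 5), ("reviewer_2", "a1", "Q1", 4), ("reviewer_3", "a1", "Q1", 3),
    ("reviewer_1", "b2", "Q1", 2), ("reviewer_2", "b2", "Q1", 3), ("reviewer_3", "b2", "Q1", 1),
]
print(aggregate_scores(reviews))  # prints {'a1': 4.0, 'b2': 2.0}
```

Because every answer is checked for exactly three reviews, a missing review raises an error instead of quietly skewing a candidate's total.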

P.S. You can read up on our interview process here.

Applied is the essential platform for debiased hiring. Purpose-built to make hiring empirical and ethical, our platform uses anonymized applications and skill-based assessments to identify talent that would otherwise have been overlooked.

Push back against conventional hiring wisdom with a smarter solution: book in a demo of the Applied platform.

Applied offers a range of additional resources to help companies embrace an ethical and empirical approach to hiring. For example, we've used data from over 100,000 applications to rank the top diversity job boards, so you can focus on channels that deliver a diverse range of candidates.
